Bringing Real-Time Audio to a Public Benefit Corporation Messaging Platform with Elixir WebRTC

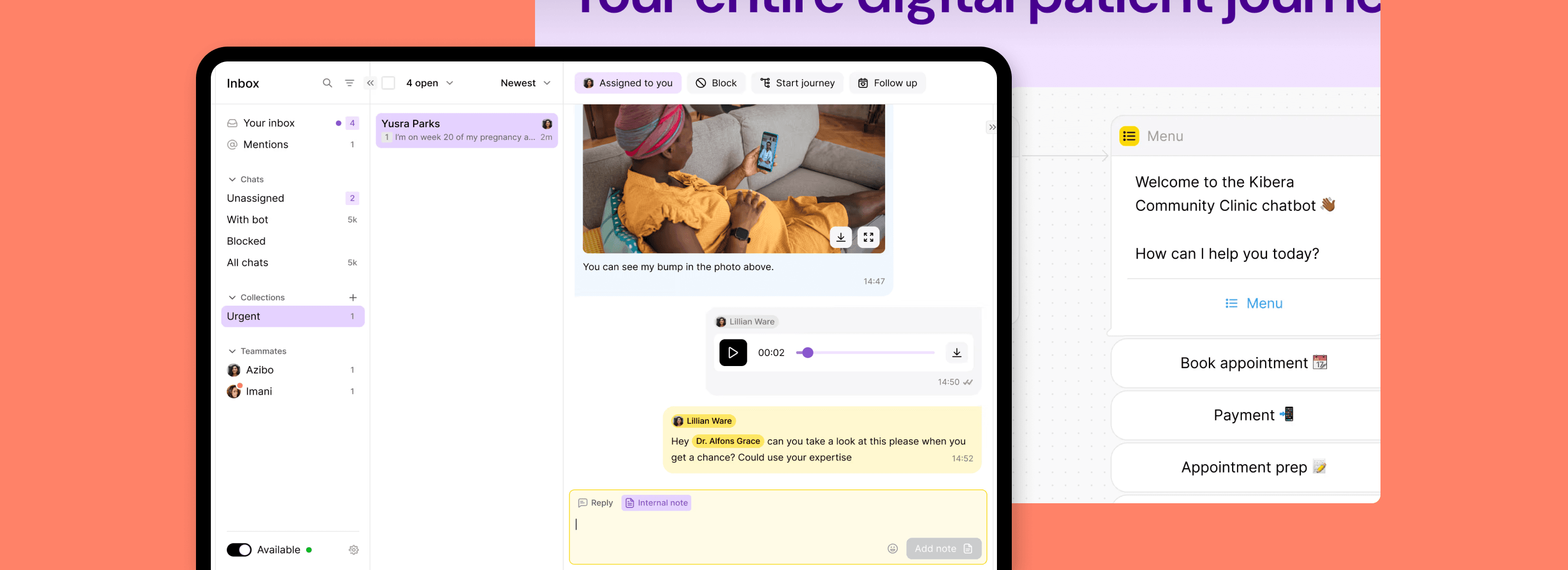

Turn.io is a Public Benefit Corporation and the first impact WhatsApp service. It helps organizations and governments connect underserved communities with health workers, NGOs, and ministries.

After discovering Elixir WebRTC during Elixir Stream Week, the client reached out to us for help. At that time, their platform, built with the Phoenix framework, LiveView, and React, was already capable of handling large-scale, text-based communication. What they needed was a reliable way to support real-time voice calls within the existing infrastructure.

During our collaboration, we expanded the platform to support browser-to-Turn.io-to-WhatsApp voice calls, added live transcriptions, and built an AI assistant.

- Integration with WhatsApp WebRTC API

- Routing audio traffic between WA and web browser via Turn.io

- Voice transcription & AI assistant integration

- WebRTC calls debugging & observability

- Phoenix Framework

- LiveView

- Elixir WebRTC

- LiveDebugger

- OpenAI

- Kubernetes

- Google Cloud Platform

- Amazon Web Services

Goals & challenges

Our goal was to bring real-time audio to Turn.io’s browser-based platform, enabling agents to seamlessly connect with people using WhatsApp on their phones. The project involved:

- Integration with WhatsApp WebRTC API and relaying audio traffic between WhatsApp and web browsers (via Turn.io)

- Adding live transcription

- Implementing an AI assistant and the ability to switch between AI and human operators

- Extending Elixir WebRTC to support receiving DTMF (Dual-Tone Multi-Frequency) tones over WebRTC

- Ensuring connectivity for users across all types of networks

- Capturing statistics and debug information for future analysis

Building audio calling with WebRTC

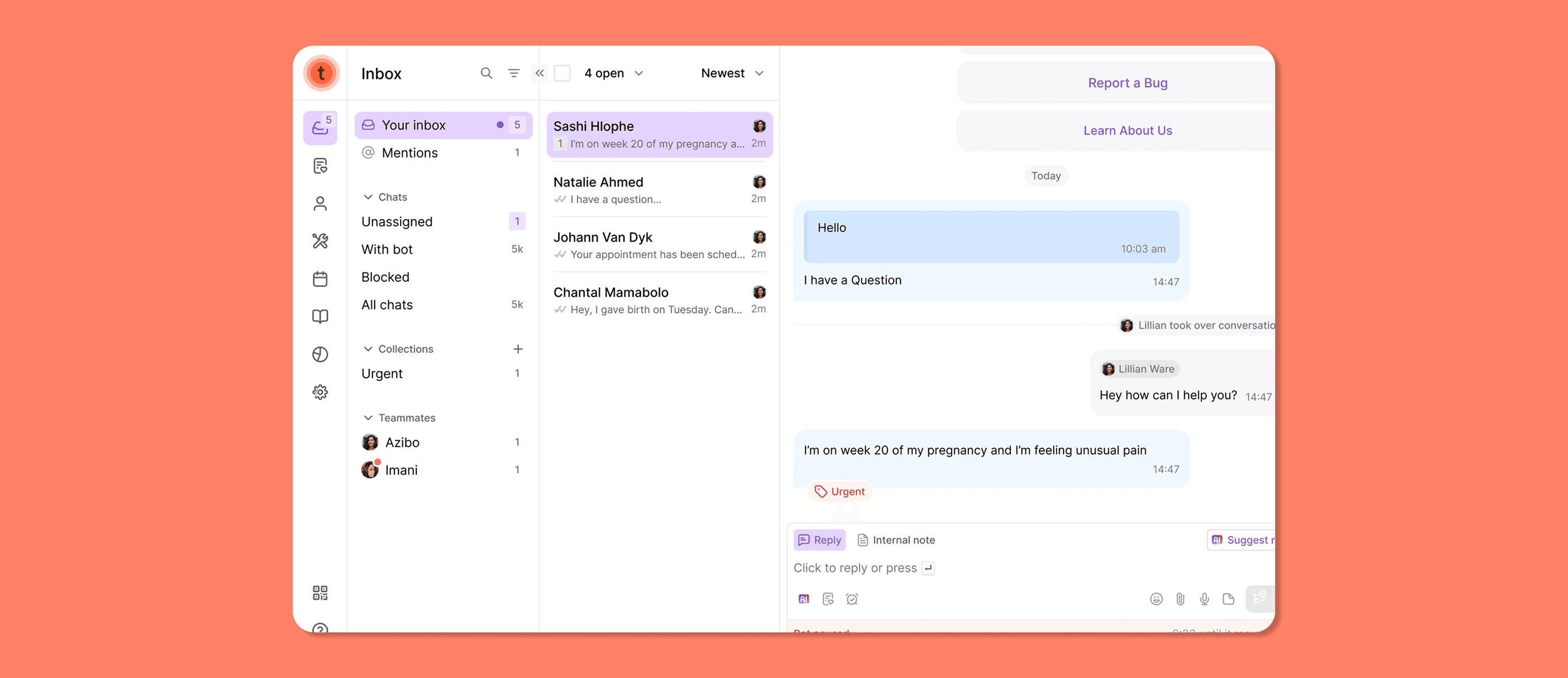

Turn.io’s platform consists of both a web-based frontend (where agents and consultants view and respond to messages) and a backend server that handles message routing by receiving messages from WhatsApp, delivering them to the browser, and sending replies back to WhatsApp.

When we joined the project, Turn.io had already been operating for several years, with the code and infrastructure for high-volume text messaging already in place. Our role was to extend this foundation by establishing WebRTC connections for real-time audio. This involved connecting WhatsApp to the server and the server to the browser, enabling audio to flow smoothly in both directions.

For this purpose, we used Elixir WebRTC – a batteries-included WebRTC implementation for the Elixir ecosystem. It follows the W3C WebRTC specification, providing the same API as web browsers but expressed in an idiomatic Elixir style. Every peer connection runs in its own Elixir process, and every call can include up to four peer connections, one each to:

- WhatsApp

- the web browser

- OpenAI for the WhatsApp transcript

- OpenAI for the browser transcript

These connections are managed by another Elixir process called CallRouter, responsible for routing audio packets between them.
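The routing idea can be sketched in a few lines. This is a minimal, library-free illustration in Python, not the actual CallRouter and not Elixir WebRTC's API: the connection names, the `ROUTES` table, and the assumption that each side's audio is also fanned out to its own transcription connection are all hypothetical.

```python
from collections import defaultdict

# Hypothetical routing table: for each source connection, the connections
# that should receive its audio. The names are illustrative only.
ROUTES = {
    "whatsapp": ["browser", "openai_whatsapp_transcript"],
    "browser": ["whatsapp", "openai_browser_transcript"],
}


class CallRouter:
    """Toy router that fans audio packets out to the configured destinations."""

    def __init__(self, routes):
        self.routes = routes
        # destination name -> list of packets forwarded to it
        self.outboxes = defaultdict(list)

    def handle_rtp(self, source, packet):
        """Forward one packet from `source`; return the destinations used."""
        destinations = self.routes.get(source, [])
        for destination in destinations:
            self.outboxes[destination].append(packet)
        return destinations


router = CallRouter(ROUTES)
router.handle_rtp("whatsapp", b"caller-audio")
router.handle_rtp("browser", b"agent-audio")
```

Keeping the routes in one table means that changing who hears whom (for example, replacing the browser with an AI assistant) is a change to data rather than to the forwarding logic.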

Improving reliability & debugging

To ensure that Turn.io’s voice calling feature works reliably across a wide range of network conditions, we focused on both improving the WebRTC connection establishment process and improving visibility into call performance.

As part of this, we implemented statistics collection using get_stats(), storing the data in the database in the Erlang External Term Format. Following the W3C WebRTC specification, these post-call metrics include, among others:

- bytes and packets sent/received

- retransmissions

- NACKs

- ICE connectivity data – including local and remote candidates and the ICE candidate pair grid

- SDP offer/answer details

As a result, when the client team observes unusual behavior, they can easily download a diagnostic file and share it with the Turn.io developers, speeding up the debugging process.

Since everything was deployed in Kubernetes on GCP and AWS, we also employed Cloudflare’s TURN servers for the most restrictive network providers (e.g., Orange or WindTre, which use Symmetric NAT). For alternative deployments on bare machines (e.g., Hetzner without K8s), this additional step typically isn't required, provided UDP traffic isn’t restricted for client connections.

As part of our reliability work, we encountered a specific issue on the Orange network: the DTLS (Datagram Transport Layer Security) handshake message carrying the certificate exceeded the network’s MTU (Maximum Transmission Unit) and was dropped en route. Since DTLS is essential to WebRTC encryption and negotiation, this caused the peer connection to fail. To resolve it, we improved our DTLS-SRTP implementation by supporting certificate fragmentation and setting the MTU to 1200 bytes, following WebRTC recommendations. This change ensures large certificates are delivered reliably, even in constrained network conditions.
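To make the fragmentation idea concrete, here is a minimal sketch in Python of how a large handshake message can be split into DTLS 1.2 handshake fragments and put back together. It is an illustration under stated assumptions, not the Elixir WebRTC implementation: the function names are hypothetical, and the 1200-byte limit is treated as the maximum size of one DTLS record (IP and UDP headers are ignored).

```python
import struct

DTLS_RECORD_HEADER = 13     # type, version, epoch, sequence number, length
DTLS_HANDSHAKE_HEADER = 12  # msg_type, length, message_seq, fragment_offset, fragment_length
CERTIFICATE = 11            # handshake message type of a Certificate message


def u24(value):
    """Encode an integer as a 3-byte big-endian field."""
    return value.to_bytes(3, "big")


def fragment_handshake(msg_type, message_seq, body, mtu=1200):
    """Split one handshake message body into fragments that fit within `mtu`."""
    max_body = mtu - DTLS_RECORD_HEADER - DTLS_HANDSHAKE_HEADER
    fragments = []
    for offset in range(0, len(body), max_body):
        chunk = body[offset:offset + max_body]
        header = (
            bytes([msg_type])
            + u24(len(body))                 # length of the whole message
            + struct.pack("!H", message_seq)
            + u24(offset)                    # where this fragment starts
            + u24(len(chunk))                # how many bytes it carries
        )
        fragments.append(header + chunk)
    return fragments


def reassemble(fragments):
    """Rebuild the message body from fragments, in any arrival order."""
    total = int.from_bytes(fragments[0][1:4], "big")
    body = bytearray(total)
    for fragment in fragments:
        offset = int.from_bytes(fragment[6:9], "big")
        length = int.from_bytes(fragment[9:12], "big")
        body[offset:offset + length] = fragment[12:12 + length]
    return bytes(body)


certificate_body = bytes(range(256)) * 12  # 3072 bytes, a stand-in for a large certificate
fragments = fragment_handshake(CERTIFICATE, message_seq=1, body=certificate_body)
```

Because every fragment repeats the total length and carries its own offset, the receiver can reassemble the message even if fragments arrive out of order.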

Last but not least, the Turn.io team started using LiveDebugger to make debugging smoother and more efficient. LiveDebugger is our open-source browser-based tool for inspecting and debugging applications built with Phoenix LiveView. It gives the client’s developers a clear, real-time view into the internal state of the platform, helping them spot and resolve issues faster.

Helping implement AI features

Once audio calling was up and running, the next phase of the project focused on enriching Turn.io’s platform with AI-based features.

Enabling real-time transcription

The first step in bringing artificial intelligence to the Turn.io platform was adding live transcription. To improve note-taking and create a clear interaction history, we integrated Turn.io’s audio pipeline with OpenAI to transcribe user-agent conversations in real time, enabling consultants to see a live text version of calls in the browser. A key part of this process was establishing a dedicated WebRTC connection between Turn.io and OpenAI, where Turn.io streams audio via RTP packets, and OpenAI returns transcripts in real time over data channels.

Since Turn.io uses Elixir WebRTC, while OpenAI relies on Pion – a WebRTC implementation in Go – the integration came with a few challenges. Although both libraries follow the same standard, subtle differences meant they didn’t interoperate well out of the box.

The main problem concerned ICE nomination. Pion requires a pair to be nominated by the controlling side before it can start sending any data. Because Elixir WebRTC used regular nomination, the nomination happened only once all connectivity checks had completed, which may take several seconds, delaying the whole flow of data.

To fix that, we added support for aggressive nomination on the controlling side in Elixir WebRTC and made it configurable, which cut connection establishment time to a minimum.
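The difference between the two strategies can be shown with a toy timing model. The Python sketch below is a simplification based on the description above, not a model of any real ICE agent: the function name and the example timings are hypothetical, and the extra round trip that regular nomination needs is ignored.

```python
def time_until_media_can_flow(check_results, aggressive):
    """Return the time (ms) at which a candidate pair is nominated.

    check_results: list of (finish_time_ms, succeeded) tuples, one per
    candidate pair. A failed check finishes when it times out.
    """
    successes = [finished for finished, ok in check_results if ok]
    if not successes:
        return None  # no working pair, so the connection fails
    if aggressive:
        # Every check carries the nomination flag, so the first
        # successful pair can be used immediately.
        return min(successes)
    # Regular nomination as described above: wait for all checks
    # to finish before nominating a pair.
    return max(finished for finished, _ in check_results)


# Hypothetical call: one pair works quickly, two others time out.
checks = [(40, True), (250, False), (3000, False)]
regular_ms = time_until_media_can_flow(checks, aggressive=False)
aggressive_ms = time_until_media_can_flow(checks, aggressive=True)
```

In this model, a single slow or unreachable candidate pair is enough to hold back a regular nomination, while aggressive nomination is bounded only by the fastest working pair.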

Developing an AI assistant

To boost efficiency and help more Turn.io users, the next step was enabling conversations with an AI assistant. Instead of going straight to a human agent, callers can first speak with a bot that understands questions, provides basic answers, or even triggers specified backend actions using OpenAI’s function calling.

The implementation was very similar to live transcription, using OpenAI’s WebRTC API. The main challenge was enabling a smooth handover between AI and human operators. From the WhatsApp user’s perspective, this transition needs to happen seamlessly, without restarting the connection or introducing unnecessary delays. To achieve that, we had to ensure that the RTP packet sequence numbers and timestamps sent to WhatsApp remained continuous, even after the switch. Fortunately, Elixir WebRTC includes RTP Munger, a tool specifically designed for this kind of stream manipulation. This smooth handover was essential to ensure that callers aren’t left stuck with the bot when real human help is needed.
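The idea behind keeping sequence numbers and timestamps continuous can be sketched as follows. This Python class is an illustration of the general technique only and does not reproduce the API of Elixir WebRTC's RTP Munger. The class and method names are hypothetical, and the default timestamp step of 960 assumes 20 ms audio frames at a 48 kHz clock.

```python
SEQ_MOD = 2 ** 16  # RTP sequence numbers are 16-bit
TS_MOD = 2 ** 32   # RTP timestamps are 32-bit


class ContinuityMunger:
    """Rewrites RTP sequence numbers and timestamps so that the outgoing
    stream stays continuous when the packet source changes."""

    def __init__(self):
        self.seq_offset = 0
        self.ts_offset = 0
        self.last_seq = None
        self.last_ts = None
        self.switching = False

    def switch_source(self):
        """Call when packets will start coming from a different source."""
        self.switching = True

    def munge(self, seq, ts, ts_step=960):
        """Return (seq, ts) rewritten to continue the outgoing stream."""
        if self.switching and self.last_seq is not None:
            # Recompute offsets so the first packet of the new source
            # lands right after the last packet that was sent out.
            self.seq_offset = (self.last_seq + 1 - seq) % SEQ_MOD
            self.ts_offset = (self.last_ts + ts_step - ts) % TS_MOD
        self.switching = False
        out_seq = (seq + self.seq_offset) % SEQ_MOD
        out_ts = (ts + self.ts_offset) % TS_MOD
        self.last_seq, self.last_ts = out_seq, out_ts
        return out_seq, out_ts


munger = ContinuityMunger()
first = munger.munge(100, 1000)            # packets from the AI assistant
second = munger.munge(101, 1960)
munger.switch_source()                     # handover to the human agent
after_switch = munger.munge(5000, 777000)  # unrelated numbering on the new source
following = munger.munge(5001, 777960)
```

From the receiver's point of view, the stream after the handover looks like an uninterrupted continuation of the original one, so no renegotiation is needed.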

We also supported Turn.io in implementing pre-recorded messages, which are especially useful for sharing important general information with callers before they're connected to a human agent or AI assistant. A helpful reference for this feature is our send_from_file example.

Working on DTMF support

Since OpenAI’s models often struggle to understand spoken numbers, we helped Turn.io use DTMF (Dual-Tone Multi-Frequency) to improve the platform's reliability in situations when users need to share IDs or reference codes during calls with AI assistants. The key part of this feature was implementing DTMF tone reception directly in Elixir WebRTC and exposing it through the API for use in business logic. As a result, callers can press digits on their phone keypad during a call, and these inputs are sent as events via RTP packets, reducing integration complexity and friction on the Turn.io side.

This gives users a reliable and familiar way to enter numbers, making the experience smoother and reducing frustration when precision matters.
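For readers curious about what such an event looks like on the wire, the sketch below parses the 4-byte telephone-event payload defined in RFC 4733 and collects the pressed keys. It is a standalone Python illustration, not the API that Elixir WebRTC exposes: the function and class names are hypothetical, and deduplicating by RTP timestamp is one simple way to handle the repeated packets a sender emits for a single key press.

```python
import struct
from dataclasses import dataclass

# RFC 4733 event codes 0-15 map to these keypad symbols.
EVENT_TO_KEY = "0123456789*#ABCD"


@dataclass
class DtmfEvent:
    key: str
    end: bool       # True on the packet(s) that mark the end of the key press
    volume: int     # tone power level, 0-63
    duration: int   # in RTP timestamp units


def parse_telephone_event(payload: bytes) -> DtmfEvent:
    """Parse event (8 bits), E/R flags + volume (8 bits), duration (16 bits)."""
    event, flags, duration = struct.unpack("!BBH", payload[:4])
    if event >= len(EVENT_TO_KEY):
        raise ValueError(f"not a DTMF event code: {event}")
    return DtmfEvent(
        key=EVENT_TO_KEY[event],
        end=bool(flags & 0x80),
        volume=flags & 0x3F,
        duration=duration,
    )


def collect_digits(packets):
    """packets: iterable of (rtp_timestamp, payload). Returns the keys pressed.

    All packets of one key press share an RTP timestamp, and the end packet
    is usually sent several times, so each timestamp is counted once.
    """
    digits, seen = [], set()
    for rtp_timestamp, payload in packets:
        event = parse_telephone_event(payload)
        if event.end and rtp_timestamp not in seen:
            seen.add(rtp_timestamp)
            digits.append(event.key)
    return "".join(digits)


packets = [
    (1000, bytes([4, 0x0A, 0x00, 0xA0])),  # "4" still being pressed
    (1000, bytes([4, 0x8A, 0x03, 0x20])),  # "4" ended
    (1000, bytes([4, 0x8A, 0x03, 0x20])),  # retransmitted end packet
    (9000, bytes([2, 0x8A, 0x03, 0x20])),  # "2" ended
]
entered = collect_digits(packets)
```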

Results

We’re really happy to have helped Turn.io make real-time voice calls over WhatsApp smooth and reliable.

- The audio calling feature is currently used by several of Turn.io’s partner organizations.

- In the first weeks after the introduction of audio calls, weekly volume grew by over 250% – from around 20 to more than 70 calls.

- Support for TURN servers and DTMF tones improved platform accessibility and reliability across varied network conditions.

- Post-call statistics storage helps the Turn.io team troubleshoot issues faster and gain deeper insights into platform performance.