Introducing ExVision

Daniel Jodlos•Jun 4, 2024•4 min read

TL;DR: We’re releasing a new library for Elixir with some vision-related AI.

Our team is delighted to announce the initial release of ExVision, a collection of computer vision AI models for Elixir featuring a high-level API. In this initial release, ExVision features models for object detection, semantic segmentation, and image classification.

ExVision accepts a variety of input types, including image paths, Nx tensors, and Vix.Image (the type used by the :image library). It also automatically performs all of the necessary input preprocessing and output post-processing.

ExVision is primarily meant for people who aren’t yet very familiar with the field of AI. Our main focus is ease of use, useful examples, and good documentation. This point is best explained using an example.

{:ok, model} = ExVision.Classification.MobileNetV3.load()
ExVision.Model.run(model, "cat.jpg") #=> %{cat: 1.0, dog: 0.0, ...}

This small snippet of code is all it takes to load the model and run inference on it.
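The return value above, a map from class label to probability, hints at the kind of post-processing a classifier needs. As a rough, dependency-free sketch of that idea (this is not ExVision's implementation; the `PostprocessSketch` module, its function names, and the sample scores and labels are all hypothetical), raw model scores can be turned into such a map with a softmax:

```elixir
defmodule PostprocessSketch do
  @moduledoc "Hypothetical illustration only; not part of ExVision."

  # Converts raw scores (logits) into probabilities that sum to 1.
  # Subtracting the largest score first keeps :math.exp/1 from overflowing.
  def softmax(logits) do
    peak = Enum.max(logits)
    exps = Enum.map(logits, fn x -> :math.exp(x - peak) end)
    total = Enum.sum(exps)
    Enum.map(exps, fn e -> e / total end)
  end

  # Pairs each label with its probability, producing a map keyed by label.
  def to_class_map(logits, labels) do
    labels
    |> Enum.zip(softmax(logits))
    |> Map.new()
  end
end

# Made-up scores for three made-up classes.
result = PostprocessSketch.to_class_map([4.0, 1.0, 0.5], [:cat, :dog, :bird])

# Pick the most likely class from the resulting map.
{top_label, _probability} = Enum.max_by(result, fn {_label, prob} -> prob end)
IO.inspect(top_label)
```

With ExVision, none of this has to be written by hand; the sketch only shows what kind of work is being done behind the one-line call.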

Why does it exist?

The Elixir ecosystem seems to be lacking in near real-time capable models. Bumblebee offers some vision-related models, but not the specific variants designed for the low inference times that live, or near-live, video processing requires, such as the MobileNetV3 family. Here at Software Mansion, we do a lot of multimedia work in Elixir, so we needed these faster models. That’s how ExVision was born, and now we have decided to share it with a wider audience.

What can it do?

In this initial release, ExVision contains one model each for three classes of commonly performed vision tasks:

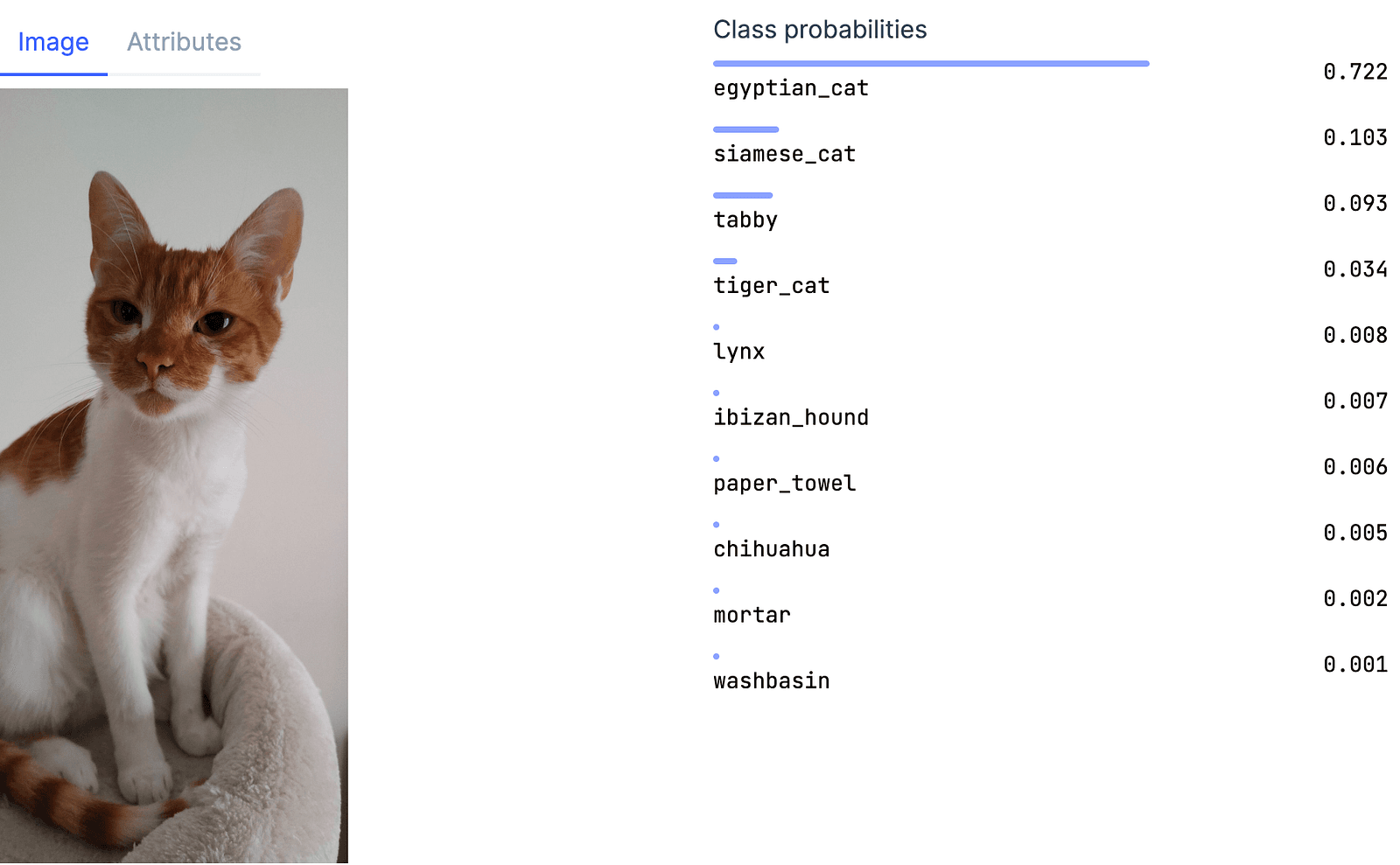

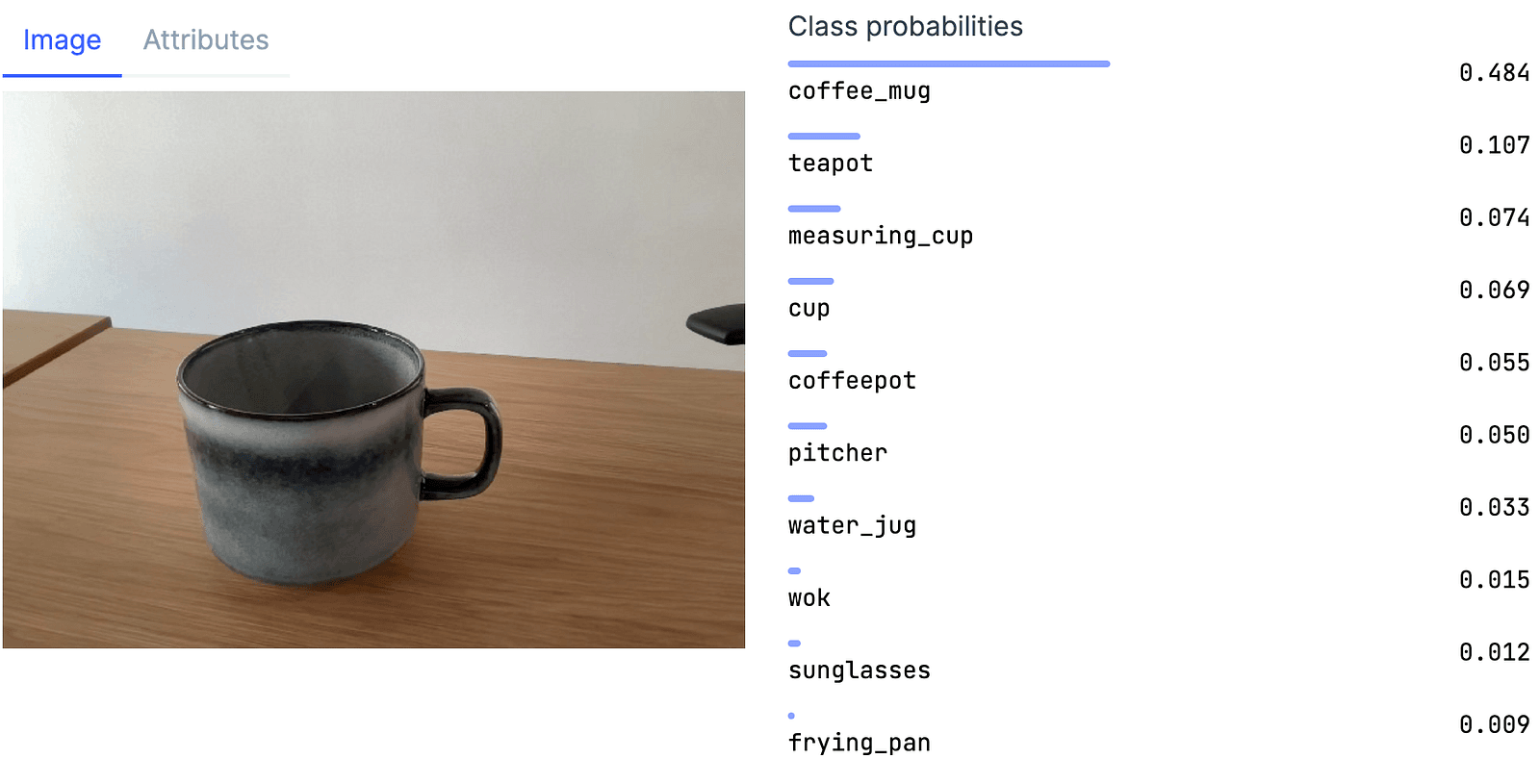

- Image classification

- Object detection

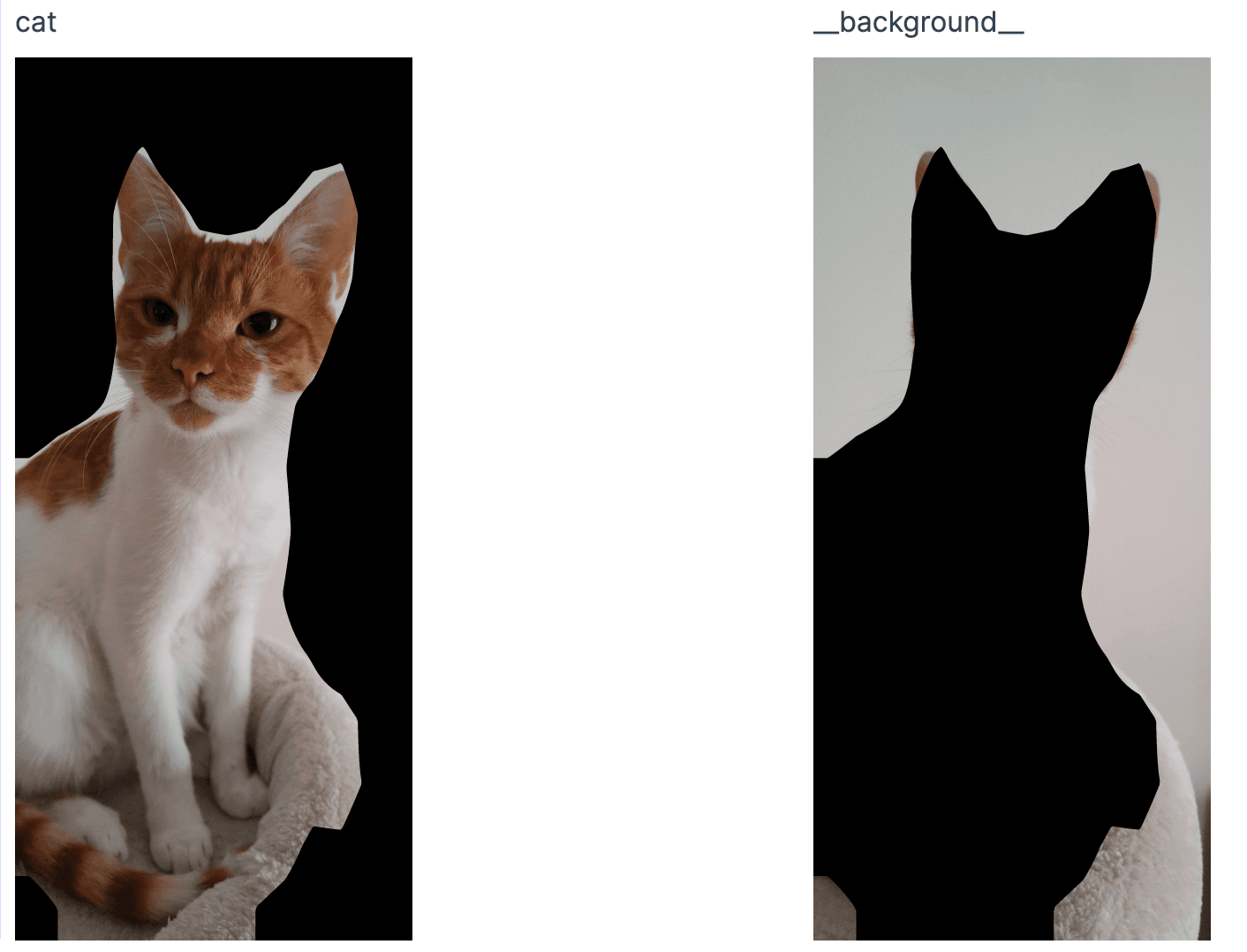

- Semantic segmentation

All ExVision models are essentially a thin abstraction layer over Nx.Serving, including full support for the process workflow. This makes it easy to use ExVision’s models in a production environment by including them in your supervision tree. They also support all of the same configuration options as Nx.Serving.

children = [
  {ExVision.Classification.MobileNetV3, name: MyImageClassifier}
]

Supervisor.start_link(children, strategy: :one_for_one)

# The model is ready for inference
ExVision.Model.batched_run(MyImageClassifier, "cat.jpg") #=> %{cat: 1.0, dog: 0.0, ...}
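For a sense of what sits underneath, the same pattern can be written directly against Nx.Serving. This is a minimal sketch rather than ExVision's code: `DoublingServing` is a hypothetical name, the "model" is a toy function that doubles its input, and the `Mix.install` version requirement is an assumption. The `batch_size` and `batch_timeout` entries are examples of the Nx.Serving process options mentioned above.

```elixir
# Assumed version requirement; adjust to whatever your project already uses.
Mix.install([{:nx, "~> 0.7"}])

# A toy serving standing in for a real model: it doubles every number in the batch.
serving =
  Nx.Serving.new(fn defn_options ->
    Nx.Defn.jit(fn tensor -> Nx.multiply(tensor, 2) end, defn_options)
  end)

children = [
  # Requests arriving within batch_timeout (ms) are grouped into batches
  # of at most batch_size entries before the function is run.
  {Nx.Serving, serving: serving, name: DoublingServing, batch_size: 8, batch_timeout: 50}
]

{:ok, _supervisor} = Supervisor.start_link(children, strategy: :one_for_one)

# Any process can now send work to the named serving.
batch = Nx.Batch.stack([Nx.tensor([1, 2, 3])])
result = Nx.Serving.batched_run(DoublingServing, batch)
IO.inspect(result)
```

Because the serving runs as a supervised process, it is restarted on failure like any other child, and concurrent callers share batches without coordinating with each other.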

ExVision handles all of the necessary preprocessing and accepts common types of inputs, including:

- Nx.Tensors

- Images (using the :image library)

- Paths to images

Why not Bumblebee?

ExVision is not trying to compete with Bumblebee. Bumblebee is focused on integrating with models found on Hugging Face, while ExVision is a place for all different kinds of models, regardless of their nature. All kinds are welcome. Currently, ExVision models aren’t native to Elixir; they run in ONNX through Ortex.

Why ONNX models?

ONNX is a cross-platform model execution engine and model serialization protocol. We have chosen ONNX primarily due to one key point: it’s possible to (relatively easily) export PyTorch models straight to ONNX and use them in ExVision with just a few lines of code, without the need to implement the model in Elixir. That enables us to provide a much wider variety of models to the end user.

That said, we recognize that ONNX isn’t perfect and is both well-loved and hated in the AI community for its various quirks. While we’re currently running ONNX, ExVision is in no way tied to it and we will consider adding Axon-implemented models if the need arises.

Where can I try it out?

ExVision is now available for download on Hex, where you can also find the documentation, as well as Livebook examples showcasing the usage of ExVision models.

Future plans

In this initial release of ExVision, we’re providing a small set of functionality and we want to hear from you what else you would like to see here. In the short term, we’re planning on improving the capabilities of ExVision by expanding the model library.

We plan on developing this library without losing sight of our main objective of simplicity and intuitiveness. There may be parts that we got wrong, and we would love to hear from you on our GitHub. All questions, suggestions, bug reports, ideas, and pull requests are most welcome.